🔥 Discover this must-read post from TechCrunch 📖

📂 **Category**: AI,Anthropic,Max Tegmark,OpenAI,pentagon,The Pro-Human Declaration

While Washington’s break with Anthropic has exposed the complete lack of any coherent rules governing AI, a bipartisan coalition of thinkers has put together something the government has so far refused to produce: a framework for what responsible AI development should actually look like.

The Pro-Human Declaration was finalized before last week's showdown between the Pentagon and Anthropic, but the collision of the two events was not lost on anyone involved.

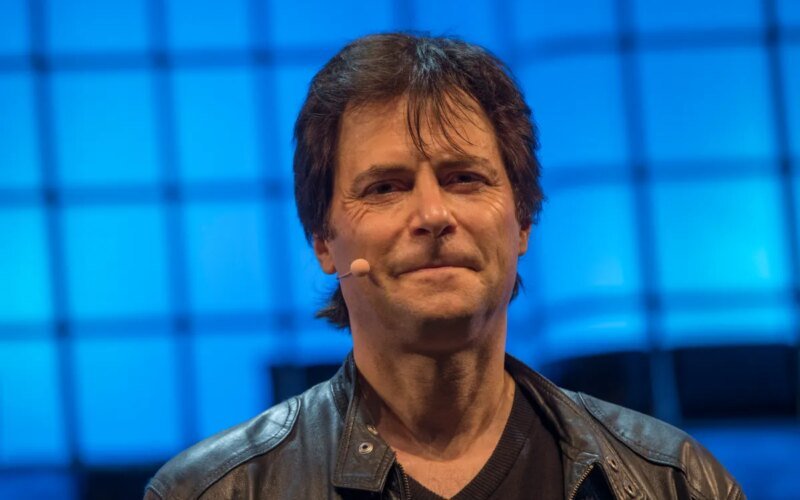

“There is something very remarkable that has happened in America in just the last four months,” Max Tegmark, an MIT physicist and artificial intelligence researcher who helped organize the effort, said in a conversation with this editor. “Polling suddenly [is showing] that 95% of all Americans oppose an unregulated race toward superintelligence.”

The newly published document, signed by hundreds of experts, former officials and public figures, opens with the no-nonsense observation that humanity is at a crossroads. One path, which the declaration calls the “race to replace,” leads to humans being replaced first as workers, then as decision-makers, with power accumulating in unaccountable institutions and their machines. The other leads to artificial intelligence that greatly expands human potential.

The latter scenario rests on five pillars: keeping humans in charge, avoiding concentration of power, protecting the human experience, preserving individual freedom, and holding artificial intelligence companies legally accountable. Among its strongest provisions are an outright ban on the development of superintelligence until there is scientific consensus that it can be done safely and genuine democratic buy-in; mandatory shutdown mechanisms on powerful systems; and a prohibition on infrastructure capable of self-replication, independent self-improvement, or resistance to shutdown.

The declaration's release coincides with a moment that makes its urgency easy to appreciate. On the last Friday of February, Defense Secretary Pete Hegseth designated Anthropic, whose AI already runs on classified military platforms, a “supply chain risk” after the company refused to give the Pentagon unlimited use of its technology. The label is typically reserved for companies with ties to China. Hours later, OpenAI struck its own deal with the Department of Defense, one that legal experts say will be difficult to implement in any meaningful way. What all of this has revealed is how costly Congressional inaction on AI has become.

As Dean Ball, a senior fellow at the Foundation for American Innovation, told the New York Times afterward: “This is not just a dispute over a contract. This is the first conversation we’ve had as a country about controlling AI systems.”

When we spoke, Tegmark offered an analogy that most people can understand. “You never have to worry that some drug company is going to release some other drug that causes serious harm before people figure out how to make it safe, because the FDA won’t let them release anything until it’s safe enough,” he said.

Turf wars in Washington rarely generate the kind of public pressure that changes laws. Instead, Tegmark sees child safety as the pressure point most likely to break the current impasse. Indeed, the declaration calls for mandatory pre-deployment testing of AI products, especially chatbots and companion apps aimed at younger users, to cover risks including increased suicidal ideation, worsening mental health conditions, and emotional manipulation.

“If there’s a creepy old man texting an 11-year-old pretending to be a little girl and trying to convince that boy to commit suicide, the guy could go to prison for that,” Tegmark said. “We already have laws. It’s illegal. So why is it any different if a machine is doing it?”

He believes that once the principle of pre-release testing of children’s products is established, the scope will inevitably expand. “People will come and say – let’s add some other requirements. Maybe we should also test that this can’t help terrorists make biological weapons. Maybe we should test to make sure that superintelligence doesn’t have the ability to overthrow the US government.”

It is no small feat that former Trump adviser Steve Bannon and Susan Rice, President Obama’s national security adviser, signed the same document — along with former Chairman of the Joint Chiefs of Staff Mike Mullen and progressive religious leaders.

“What they agree on, of course, is that they are all human,” Tegmark said. “If it comes down to whether we want a future for humans or a future for machines, they will almost certainly be on the same side.”

🕒 **Posted on**: 1772951323
