🚀 Read this trending post from TechCrunch 📖

📂 **Category**: AI,Anthropic,claude code,code review,Exclusive,vibe coding

💡 **What You’ll Learn**:

When it comes to programming, peer feedback is crucial for catching bugs early, maintaining consistency across the code base, and improving overall software quality.

The rise of “vibe coding” (using AI tools that take plain-language instructions and quickly generate large amounts of code) has changed how developers work. Although these tools have sped up development, they have also introduced new bugs, security risks, and poorly understood code.

Anthropic’s solution is an AI reviewer designed to catch bugs before they reach the codebase. The new product, called Code Review, launched Monday in Claude Code.

“We’ve seen a lot of growth in Claude Code, especially within the enterprise, and one of the questions we’re constantly getting from enterprise leaders is: Now that Claude Code has put out a bunch of pull requests, how can I make sure those requests are reviewed in an efficient way?” Kat Wu, head of product at Anthropic, told TechCrunch.

Pull requests are a mechanism developers use to submit code changes for review before those changes are merged into the codebase. Wu said Claude Code has dramatically increased code output, which has increased the number of pull requests awaiting review and created a bottleneck in shipping code.

“Code Review is our answer to that,” Wu said.

Anthropic’s launch of Code Review, which arrives first for Claude for Teams and Claude for Enterprise customers in research preview, comes at a pivotal moment for the company.

On Monday, Anthropic filed two lawsuits against the Department of Defense in response to the agency’s designation of Anthropic as a supply chain risk. The dispute is likely to leave Anthropic relying more heavily on its booming business, which has seen subscriptions quadruple since the start of the year. Claude Code’s run rate revenue has exceeded $2.5 billion since launch, according to the company.

“This product is largely aimed at our enterprise users at scale, so companies like Uber, Salesforce, and Accenture, who are already using Claude Code and now want help with the huge amount of [pull requests] that it helps produce,” Wu said.

Developer leads can turn Code Review on by default for every engineer on the team, she added. Once enabled, it integrates with GitHub and automatically analyzes pull requests, leaving comments directly on the code that point out potential issues and suggest fixes.

Wu said the focus is on fixing logical errors rather than style.

“This is really important because a lot of developers have seen automated AI feedback before, and they get upset when it’s not immediately actionable,” Wu said. “We decided that we would only focus on logical bugs. That way we would pick up the highest priority things to fix.”

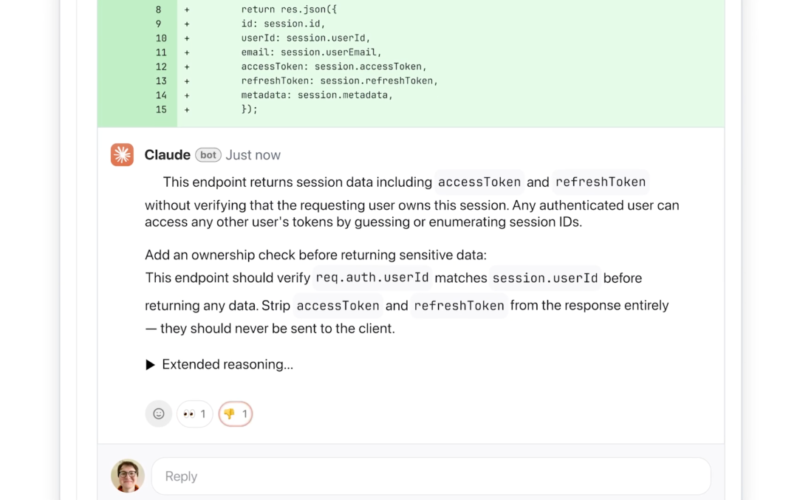

The AI explains its reasoning step by step, outlining what the problem is, why it might be a problem, and how it can be fixed. The system labels the severity of issues using colors: red for highest severity, yellow for potential issues worth reviewing, and purple for issues tied to pre-existing code or historical bugs.
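To make that structure concrete, here is a minimal sketch of how such a finding could be modeled. The `Severity` and `Finding` names, the field names, and the comment layout are hypothetical and chosen for illustration; they are not Anthropic's actual schema or output format.

```python
from dataclasses import dataclass
from enum import Enum


class Severity(Enum):
    # Color labels follow the article; the numeric ranks are an
    # assumption added here so findings can be ordered.
    RED = 0      # highest severity
    YELLOW = 1   # potential issue worth reviewing
    PURPLE = 2   # tied to pre-existing code or historical bugs


@dataclass(frozen=True)
class Finding:
    file: str
    line: int
    severity: Severity
    what: str  # what the problem is
    why: str   # why it might be a problem
    fix: str   # how it can be fixed


def format_comment(finding: Finding) -> str:
    """Render a finding as a plain-text review comment (hypothetical layout)."""
    return (
        f"[{finding.severity.name}] {finding.file}:{finding.line}\n"
        f"Problem: {finding.what}\n"
        f"Why it matters: {finding.why}\n"
        f"Suggested fix: {finding.fix}"
    )


example = Finding(
    file="billing.py",
    line=42,
    severity=Severity.RED,
    what="Average is computed without checking for an empty list.",
    why="An empty input raises ZeroDivisionError at runtime.",
    fix="Return 0.0 (or raise a clear error) when the list is empty.",
)
comment = format_comment(example)
```

Keeping the what, why, and fix fields separate mirrors the three-part explanation described above and makes each comment actionable on its own.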

It does this quickly and efficiently by relying on multiple agents working in parallel, with each agent examining the codebase from a different perspective or dimension, Wu said. A final agent aggregates and ranks the results, removing duplicates and prioritizing what is most important.
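The fan-out and aggregate pattern Wu describes can be sketched in a few lines. This is only an illustration of the general idea: the reviewer functions below are toy string checks standing in for AI agents, and every name (`check_division`, `check_arithmetic`, `check_todo`, `review`, `SEVERITY_RANK`) is hypothetical rather than part of Claude Code.

```python
from concurrent.futures import ThreadPoolExecutor

# Lower rank sorts first. The ranking is an assumption for illustration.
SEVERITY_RANK = {"red": 0, "yellow": 1, "purple": 2}


def check_division(code: str) -> list[dict]:
    """Toy reviewer: flags division by a possibly-zero `count`."""
    return [
        {"line": i, "severity": "red", "issue": "possible division by zero"}
        for i, text in enumerate(code.splitlines(), start=1)
        if "/ count" in text
    ]


def check_arithmetic(code: str) -> list[dict]:
    """Second toy reviewer that overlaps with the first, to show deduplication."""
    return check_division(code)


def check_todo(code: str) -> list[dict]:
    """Toy reviewer: flags unresolved TODO markers."""
    return [
        {"line": i, "severity": "yellow", "issue": "unresolved TODO"}
        for i, text in enumerate(code.splitlines(), start=1)
        if "TODO" in text
    ]


def review(code: str, reviewers) -> list[dict]:
    """Run reviewers in parallel, then deduplicate and rank their findings."""
    with ThreadPoolExecutor() as pool:
        results = list(pool.map(lambda reviewer: reviewer(code), reviewers))

    seen = set()
    merged = []
    for findings in results:
        for finding in findings:
            key = (finding["line"], finding["issue"])
            if key not in seen:  # drop duplicates reported by several reviewers
                seen.add(key)
                merged.append(finding)

    # Highest severity first, then by line number.
    merged.sort(key=lambda f: (SEVERITY_RANK[f["severity"]], f["line"]))
    return merged


sample = "total = sum(values)\n# TODO: handle empty input\navg = total / count\n"
findings = review(sample, [check_division, check_arithmetic, check_todo])
```

Here two reviewers report the same division issue, and the aggregation step keeps a single copy and places it ahead of the lower-severity TODO finding.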

The tool provides lightweight security analysis, and engineering leads can customize additional checks based on internal best practices. Anthropic’s recently launched Claude Code Security software provides deeper security analysis, Wu said.

The multi-agent architecture means this can be a resource-intensive product, Wu said. As with other AI services, pricing is token-based, and the cost varies with the complexity of the code, though Wu estimated that each review would cost $15 to $25 on average. She added that it is a premium experience, and a necessary one as AI tools generate more and more code.
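For rough budgeting, the per-review estimate translates into a simple range calculation. The sketch below uses only the $15 to $25 average quoted by Wu; the function name and the figure of 200 reviews per month are hypothetical, and actual token-based charges will vary with code complexity.

```python
# Per-review average estimate quoted in the article, in US dollars.
LOW_USD, HIGH_USD = 15, 25


def monthly_cost_range(reviews_per_month: int) -> tuple[int, int]:
    """Return a (low, high) monthly cost estimate in US dollars."""
    if reviews_per_month < 0:
        raise ValueError("reviews_per_month must be non-negative")
    return reviews_per_month * LOW_USD, reviews_per_month * HIGH_USD


# Hypothetical team that opens 200 pull requests a month.
low, high = monthly_cost_range(200)
print(f"Estimated monthly spend: ${low:,} to ${high:,}")
```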

“[Code Review] is something that comes from a tremendous amount of traction in the market,” Wu said. “As engineers develop with Claude Code, they see the friction to create a new feature [decrease], and they are seeing a much higher demand for code review. So we hope that through this we can enable organizations to build faster than ever before, and with far fewer errors than they did before.”

🕒 **Posted on**: 1773088101
