🚀 Explore this trending post from Hacker News 📖 📂 **Category**: ✅ **What You’ll Learn**: Disclaimer: this post has been written without AI. (Oh how the turns have tabled… (╯°□°)╯︵ ┻━┻ ) ~~AI coding agents~~ dogs are our best friends! I have lots of them. Going for walks with them every day and trying to get them to perform neat tricks. However, sometimes they misbehave and they don’t do the tricks we want them to do. This bad behaviour often comes from distractions from the environment around us. After all, our dogs can perform best when they are hyper-focused on…

Steel Bank Common Lisp (SBCL) is a high-performance Common Lisp compiler. It is open source / free software, with a permissive license. In addition to the compiler and runtime system for ANSI Common Lisp, it provides an interactive environment including a debugger, a statistical profiler, a code coverage tool, and many other extensions. SBCL runs on Linux, various BSDs, macOS, Solaris, and Windows. See the download page for supported platforms, and getting started guide for additional help. The most recent version is SBCL 2.6.1, released January…

February 24, 2026 PRESS RELEASE Apple accelerates U.S. manufacturing, with Mac mini production coming later this year Mac mini will be made at a new facility in Houston, and a soon-to-be-launched training center will support advanced manufacturing skills development CUPERTINO, CALIFORNIA Apple today announced a significant expansion of factory operations in Houston, bringing the future production of Mac mini to the U.S. for the first time. The company will also expand advanced AI server manufacturing at the factory and provide hands-on training at its new Advanced Manufacturing Center…

The other half of the context problem. Every MCP tool call in Claude Code dumps raw data into your 200K context window. A Playwright snapshot costs 56 KB. Twenty GitHub issues cost 59 KB. One access log — 45 KB. After 30 minutes, 40% of your context is gone. Inspired by Cloudflare's Code Mode — which compresses tool definitions from millions of tokens into ~1,000 — we asked: what about the other direction? Context Mode is an MCP server that sits between Claude Code…

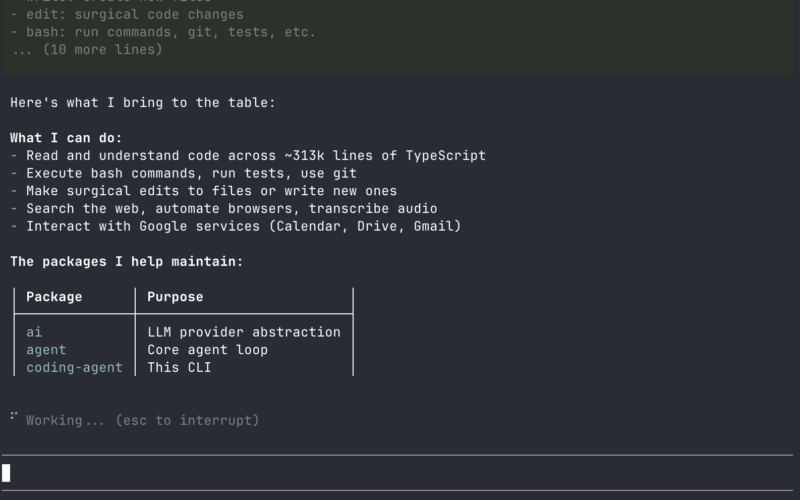

There are many coding agents, but this one is mine. `$ npm install -g @mariozechner/pi-coding-agent` About Why pi? Pi is a minimal terminal coding harness. Adapt pi to your workflows, not the other way around. Extend it with TypeScript extensions, skills, prompt templates, and themes. Bundle them as pi packages and share via npm or git. Pi ships with powerful defaults but skips features like sub-agents and plan mode. Ask pi to build what you want, or install a package that does it…

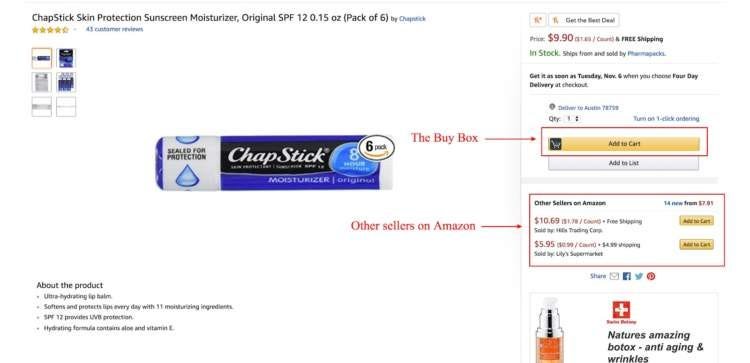

Yesterday, California Attorney General Rob Bonta filed for an immediate halt to what he says is a widespread price-fixing scheme run by the largest online retailer in America, Amazon. “Amazon tells vendors what prices it wants to see to maintain its own profitability,” Bonta alleged. “Amazon can do this because it is the world’s largest, most powerful online retailer.” His claim is that Amazon has been forcing vendors who sell on and off the platform to raise prices, and cooperating with other major online retailers…

Anthropic, the wildly successful AI company that has cast itself as the most safety-conscious of the top research labs, is dropping the central pledge of its flagship safety policy, company officials tell TIME. In 2023, Anthropic committed to never train an AI system unless it could guarantee in advance that the company’s safety measures were adequate. For years, its leaders touted that promise—the central pillar of their Responsible Scaling Policy (RSP)—as evidence that they are a responsible company that would withstand market incentives to rush to…

Capybara is a unified visual creation model, i.e., a powerful visual generation and editing framework designed for high-quality visual synthesis and manipulation tasks. The framework leverages advanced diffusion models and transformer architectures to support versatile visual generation and editing capabilities with precise control over content, motion, and camera movements. Key Features: 🎬 Multi-Task Support: Supports Text-to-Video (T2V), Text-to-Image (T2I), Instruction-based Video-to-Video (TV2V), Instruction-based Image-to-Image (TI2I), and various editing tasks 🚀 High Performance: Built with distributed inference support for efficient multi-GPU processing [2026.02.20] 🎨 Added…

Who we are About Corgi Labs Corgi Labs uses proprietary AI to optimize payment acceptance, boosting revenue through superior fraud prevention. We are data-driven with an explainable AI approach for transparency. About the team We’re looking for Founder's Associates (2 roles: 1 in US and 1 in Singapore) to work closely with the founders at Corgi Labs. This role is for someone who enjoys bringing structure to chaos and owning operational details end-to-end. You’ll sit at the center of the business, across internal ops, external…

The fastest reasoning LLM, powered by diffusion. Today, we're introducing Mercury 2 — the world's fastest reasoning language model, built to make production AI feel instant. Why speed matters more now. Production AI isn't one prompt and one answer anymore. It's loops: agents, retrieval pipelines, and extraction jobs running in the background at volume. In loops, latency doesn’t show up once. It compounds across every step, every user, every retry. Yet current LLMs still share the same bottleneck: autoregressive, sequential decoding. One token at a time, left to right. A…