🚀 Explore this trending post from TechCrunch 📖

📂 **Category**: AI

💡 **What You’ll Learn**:

Flash floods are among the world’s deadliest weather events, killing more than 5,000 people each year. They are also among the hardest to predict. But Google believes it has solved this problem in an unlikely way: by reading the news.

While humans have collected vast amounts of weather data, flash floods are too short-lived and localized to be measured comprehensively, in the way temperature or even river flow is monitored over time. Because of this data gap, deep learning models, which are increasingly capable of forecasting the weather, have been unable to predict flash floods.

To solve this problem, Google researchers used Gemini, the company’s large language model, to sift through 5 million news articles from around the world, isolate reports of 2.6 million distinct floods, and convert those reports into a geotagged time series called “Groundsource.” It’s the first time the company has used language models for this kind of work, according to Gila Lewicki, a product manager at Google Research. The research and dataset were shared publicly on Thursday morning.
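The last step of that pipeline, turning extracted reports into a geotagged time series, can be illustrated with a short Python sketch. It is a minimal illustration under stated assumptions, not Google’s published method: it assumes the language model has already reduced each article to a hypothetical `FloodReport` record (latitude, longitude, date), and that duplicate coverage of the same event is merged by snapping reports to a fixed grid. The record type, the 0.05-degree cell size, and the deduplication rule are all assumptions.

```python
import math
from collections import defaultdict
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class FloodReport:
    """Hypothetical record an LLM might extract from a single news article."""
    lat: float
    lon: float
    day: date


CELL_DEG = 0.05  # assumed grid resolution in degrees (illustrative only)


def cell_of(lat: float, lon: float) -> tuple[int, int]:
    """Snap a coordinate to the index of the grid cell that contains it."""
    return (math.floor(lat / CELL_DEG), math.floor(lon / CELL_DEG))


def build_time_series(reports):
    """Map each grid cell to a sorted list of distinct days with a reported flood.

    Several articles describing the same event (same cell, same day)
    collapse into a single observation.
    """
    events = defaultdict(set)
    for r in reports:
        events[cell_of(r.lat, r.lon)].add(r.day)
    return {cell: sorted(days) for cell, days in events.items()}


# Hypothetical extracted reports: two articles about one event, plus a later flood.
reports = [
    FloodReport(-25.97, 32.58, date(2025, 2, 10)),
    FloodReport(-25.96, 32.59, date(2025, 2, 10)),
    FloodReport(-25.97, 32.58, date(2025, 3, 1)),
]
series = build_time_series(reports)
print(series)
```

Snapping to a grid is only one plausible way to merge duplicate coverage; a real pipeline would also have to resolve vague place names and conflicting dates, which this sketch ignores.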

Using Groundsource as a real-world record of past floods, the researchers trained a model built on a Long Short-Term Memory (LSTM) neural network that ingests global weather forecasts and outputs the probability of a flash flood in a given area.
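For readers unfamiliar with the architecture, the plain-Python sketch below shows the general shape of such a model: an LSTM reads a sequence of forecast features for one area, one time step at a time, and a sigmoid output layer turns its final hidden state into a probability between 0 and 1. It is a toy, untrained forward pass with random weights; the `TinyLSTM` class, the two input features, and the layer sizes are assumptions for illustration and say nothing about how Google’s model is actually configured or trained.

```python
import math
import random


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def matvec(W, v):
    """Multiply matrix W (list of rows) by vector v."""
    return [sum(w * x for w, x in zip(row, v)) for row in W]


class TinyLSTM:
    """Hypothetical single-layer LSTM with a sigmoid head (random, untrained weights)."""

    def __init__(self, n_in: int, n_hidden: int, seed: int = 0):
        rng = random.Random(seed)

        def mat(rows, cols):
            return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]

        self.n_hidden = n_hidden
        # One weight matrix per gate, applied to [input, previous hidden state].
        self.W = {k: mat(n_hidden, n_in + n_hidden) for k in ("i", "f", "o", "c")}
        self.b = {k: [0.0] * n_hidden for k in ("i", "f", "o", "c")}
        self.w_out = [rng.uniform(-0.5, 0.5) for _ in range(n_hidden)]
        self.b_out = 0.0

    def step(self, x, h, c):
        z = list(x) + h  # concatenate current input with previous hidden state
        pre = {k: [a + b for a, b in zip(matvec(self.W[k], z), self.b[k])] for k in self.W}
        i = [sigmoid(v) for v in pre["i"]]    # input gate
        f = [sigmoid(v) for v in pre["f"]]    # forget gate
        o = [sigmoid(v) for v in pre["o"]]    # output gate
        g = [math.tanh(v) for v in pre["c"]]  # candidate cell values
        c = [fj * cj + ij * gj for fj, cj, ij, gj in zip(f, c, i, g)]
        h = [oj * math.tanh(cj) for oj, cj in zip(o, c)]
        return h, c

    def flood_probability(self, sequence) -> float:
        """Run the LSTM over a sequence of feature vectors and return P(flash flood)."""
        h = [0.0] * self.n_hidden
        c = [0.0] * self.n_hidden
        for x in sequence:
            h, c = self.step(x, h, c)
        return sigmoid(sum(w * v for w, v in zip(self.w_out, h)) + self.b_out)


# Hypothetical hourly features for one area: [forecast rainfall in mm, soil moisture fraction]
forecast = [[0.0, 0.3], [5.0, 0.35], [22.0, 0.6], [40.0, 0.9]]
model = TinyLSTM(n_in=2, n_hidden=4)
p = model.flood_probability(forecast)
print(f"P(flash flood) = {p:.3f}")
```

In a real system the weights would be learned by comparing predicted probabilities against observed floods, which is the role a dataset like Groundsource plays.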

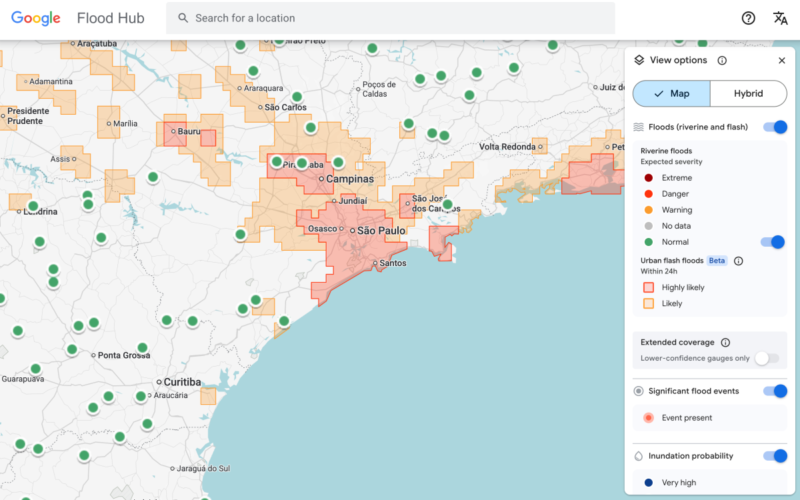

Google’s flash flood forecasting model now highlights risks to urban areas in 150 countries on the company’s Flood Hub platform, and shares its data with emergency response agencies around the world. Antonio Jose Belleza, an emergency response officer at the Southern African Development Community who piloted the forecast model with Google, said it helped his organization respond to floods more quickly.

The model still has limitations. For one, its resolution is fairly coarse: it identifies risk across areas of 20 square kilometers. It is also less accurate than the U.S. National Weather Service’s flood warning system, partly because Google’s model doesn’t incorporate local radar data, which enables real-time rainfall tracking.

Part of the point, however, is that the project is designed for places where local governments can’t afford expensive weather-sensing infrastructure or lack extensive meteorological records.

“Because we collect millions of reports, the Groundsource dataset actually helps rebalance the map,” Juliet Rotenberg, a program manager on Google’s Resilience team, told reporters this week. “It enables us to extrapolate to other areas where there isn’t a lot of information.”

Rotenberg said the team hopes the approach of using large language models to build quantitative datasets from qualitative written sources can be applied to other ephemeral but important phenomena for forecasting, such as heat waves and mudslides.

Google’s contribution is part of a growing effort to assemble data for deep-learning-based weather forecasting models, said Marshall Moutenot, CEO of Upstream Tech, a company that uses similar deep learning models to forecast river flows for clients such as hydropower companies. Moutenot co-founded dynamical.org, a group that curates machine-learning-ready weather data for researchers and startups.

“Data scarcity is one of the most difficult challenges in geophysics,” Moutenot said. “There’s a lot of data out there, but when you want to evaluate against ground truth, there isn’t enough. This was a really innovative approach to getting that data.”

🕒 **Posted on**: March 12, 2026 (10:53 UTC)