🚀 Check out this insightful post from TechCrunch 📖

📂 **Category**: AI,Exclusive,Guide Labs,science

📌 **What You’ll Learn**:

The challenge of wrangling a deep learning model is often understanding why it does what it does. Whether it’s xAI’s repeated struggle sessions to fine-tune Grok’s weird policies, ChatGPT’s struggles with sycophancy, or ordinary hallucinations, digging into a neural network with billions of parameters is no easy feat.

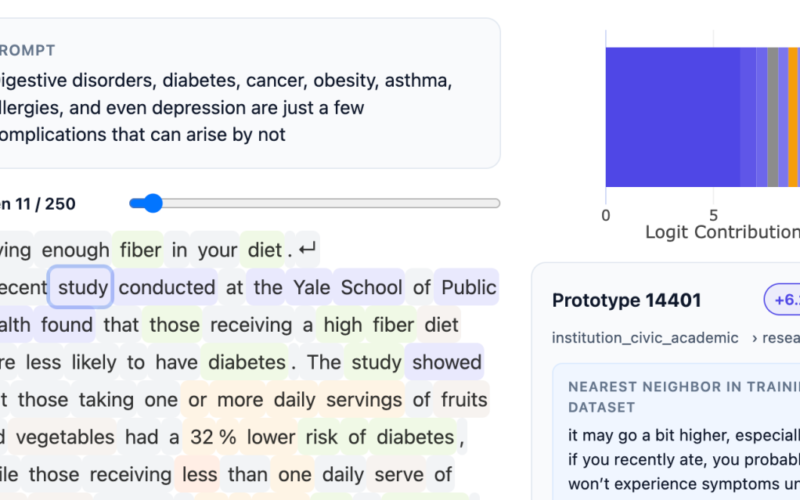

Guide Labs, a San Francisco startup founded by CEO Julius Adebayo and Chief Scientific Officer Aya Abdulsalam Ismail, offers an answer to this problem today. On Monday, the company open sourced an 8-billion-parameter LLM, Steerling-8B, trained using a new architecture designed to make its actions easily interpretable: every token produced by the model can be traced back to its origins in the LLM training data.

This can be as simple as identifying reference material for the facts the model cites, or as complex as understanding the model’s understanding of humor or sex.

“If I have a trillion ways to encode sex, and I encode them in a billion of my trillion things, you have to make sure you find all those billion things that I have encoded, and then you have to be able to reliably turn that on, and turn that off,” Adebayo told TechCrunch. “You can do it with existing models, but they’re very fragile… It’s kind of one of the big questions.”

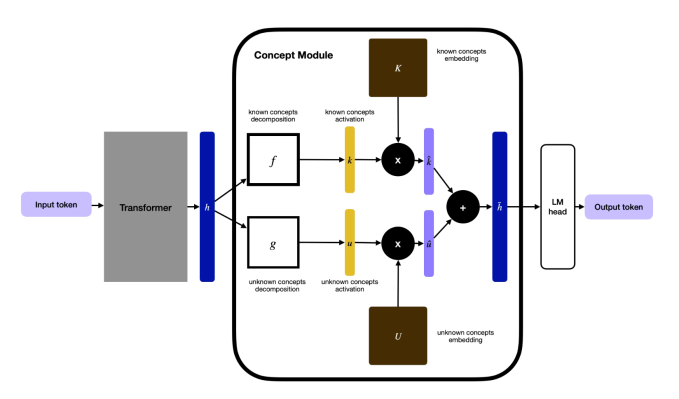

Adebayo began this work while earning his PhD at MIT, co-authoring a widely cited paper in 2020 that showed existing methods for understanding deep learning models were not reliable. That work ultimately led to a new way of building LLMs: developers insert a concept layer into the model that groups data into traceable categories. This required more up-front annotation of the data, but by using other AI models to help, the team was able to train this model as the largest proof of concept to date.
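The idea of a concept layer can be illustrated with a toy sketch. This is not Guide Labs' architecture or code: the concept names, the weights, and the helper functions `concept_scores`, `token_contributions`, and `logits` are all hypothetical, and the sketch attributes outputs to named concepts rather than to training data. It only shows why routing every output through named concepts makes each token's score decomposable, and why a concept can then be switched off in one place.

```python
from typing import Dict, FrozenSet, List

# Hypothetical concept names and weights, chosen only for illustration.
CONCEPTS = ["finance", "humor", "violence"]
VOCAB = ["loan", "joke", "fight"]

# One row per concept: maps a 2-dimensional hidden state to a concept score.
HIDDEN_TO_CONCEPT = [
    [1.0, 0.0],
    [0.0, 1.0],
    [0.5, 0.5],
]

# One row per output token: maps concept scores to that token's logit.
CONCEPT_TO_LOGIT = [
    [2.0, 0.0, 0.0],
    [0.0, 2.0, 0.0],
    [0.0, 0.0, 2.0],
]


def concept_scores(hidden: List[float],
                   blocked: FrozenSet[str] = frozenset()) -> Dict[str, float]:
    """Project the hidden state onto named concepts; blocked concepts are zeroed."""
    scores = {}
    for name, row in zip(CONCEPTS, HIDDEN_TO_CONCEPT):
        value = sum(w * h for w, h in zip(row, hidden))
        scores[name] = 0.0 if name in blocked else value
    return scores


def token_contributions(scores: Dict[str, float], token: str) -> Dict[str, float]:
    """Split one token's logit into a per-concept contribution."""
    row = CONCEPT_TO_LOGIT[VOCAB.index(token)]
    return {name: weight * scores[name] for name, weight in zip(CONCEPTS, row)}


def logits(scores: Dict[str, float]) -> Dict[str, float]:
    """Each logit is exactly the sum of its per-concept contributions."""
    return {tok: sum(token_contributions(scores, tok).values()) for tok in VOCAB}


if __name__ == "__main__":
    hidden = [1.0, 3.0]
    scores = concept_scores(hidden)
    print(logits(scores))
    print(token_contributions(scores, "joke"))
    # Steering: turn the "humor" concept off and recompute.
    print(logits(concept_scores(hidden, blocked=frozenset({"humor"}))))
```

Because the output depends on the hidden state only through the concept scores, blocking a concept is a single, reliable intervention, in contrast to hunting for every place a conventional model might have encoded it.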

“This kind of explainability that people are making is… the neuroscience model, and we’re flipping it,” Adebayo said. “What we’re doing is actually engineering the model from the ground up so that you don’t need to study the neuroscience.”

One concern about this approach is that it may eliminate some of the emergent behaviors that make LLMs so interesting: their ability to generalize in new ways to things they have not been trained on. Adebayo says this still happens in his company’s model: his team tracks what it calls “discovered concepts,” which the model has found on its own, such as quantum computing.

This kind of interpretable architecture will be something everyone needs, Adebayo says. For consumer-facing LLMs, these techniques should allow model builders to do things like block the use of copyrighted material, or better control outputs on topics like violence or drug use. Regulated industries will require more controllable LLMs, for example in finance, where a model evaluating loan applicants needs to consider things like financial records but not race. There is also a need for interpretability in scientific work, another area where Guide Labs has developed technology. Protein folding has been a huge success for deep learning models, but scientists need more insight into why their software arrived at a successful combination.

“This model shows that training interpretable models is no longer a science problem; it is now an engineering problem,” Adebayo said. “We’ve got the science figured out and we can scale it, and there’s no reason why this kind of model wouldn’t match the performance of frontier-level models,” which have many more parameters.

Guide Labs says Steerling-8B can achieve 90% of the capability of existing models while using less training data, thanks to its new architecture. The next step for the company, which emerged from Y Combinator and raised a $9 million seed round in November 2024, is to build a larger model and begin offering API and agentic access to users.

“The way we approach current training paradigms is very primitive, so democratizing inherent interpretability will actually be a good thing in the long run for our species,” Adebayo told TechCrunch. “As we go after these models that are going to be super intelligent, you don’t want anything making decisions for you that’s too vague for you.”

🕒 **Posted on**: 1771869867
