💥 Explore this insightful post from TechCrunch 📖

📂 **Category**: Apps,Social,social media,Instagram,Safety,Meta,parents,Teens

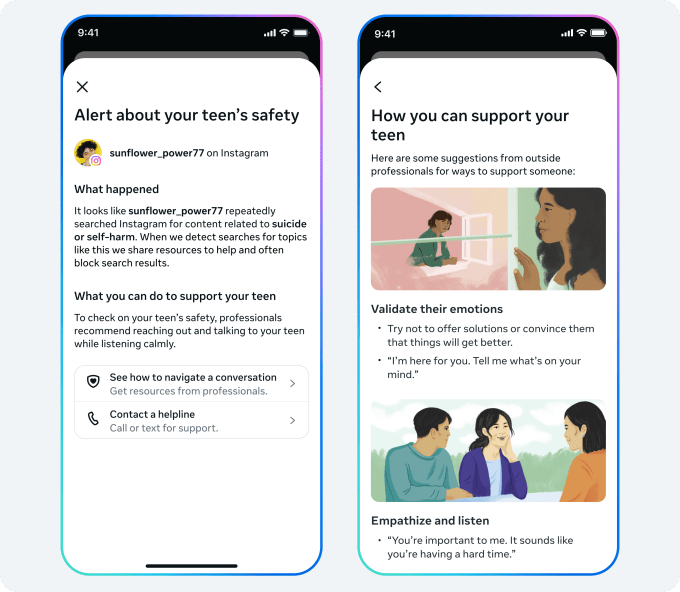

Instagram announced Thursday that it will begin alerting parents if their teenager repeatedly tries to search for terms related to suicide or self-harm within a short period of time. Alerts will launch in the coming weeks for parents enrolled in Instagram parental controls.

The Meta-owned social platform says that while it already blocks users from searching for suicide and self-harm content, these new alerts are designed to make sure parents are aware if their teen is repeatedly trying to search for such content so they can support their teen.

Searches that may trigger an alert include phrases that encourage suicide or self-harm, phrases that indicate the teen may be at risk of harming themselves, and terms such as “suicide” or “self-harm.”

Instagram says parents will receive the alert via email, text or WhatsApp, depending on the contact information they provided, along with an in-app notification. The notice will include resources designed to help parents navigate conversations with their teens.

The move comes as Meta and other major tech companies currently face several lawsuits seeking to hold social media giants liable for harming teens.

While testifying in a lawsuit in the U.S. District Court for the Northern District of California this week, Instagram chief Adam Mosseri was grilled by prosecutors in an ongoing social media addiction case over the app’s delayed rollout of basic safety features, including a nudity filter for teens’ private messages.

Additionally, during testimony in a separate lawsuit before the Los Angeles County Superior Court, it was revealed that an internal Meta research study found that parental supervision and controls had little effect on children’s compulsive social media use. The study also found that children who experienced stressful life events were more likely to struggle to appropriately regulate their social media use.

Given the ongoing lawsuits accusing the company of failing to protect teens on its platforms, the timing of these new alerts isn’t entirely surprising.

The company says it will try to avoid sending these notifications unnecessarily, because overusing them could make them less effective.

“In working to strike this important balance, we analyzed Instagram search behavior and consulted experts from our Suicide and Self-Harm Advisory Group,” Instagram explained in a blog post. “We’ve chosen a threshold that requires just a few searches over a short period of time, while remaining on the side of caution. While this means we may occasionally notify parents when there is no real cause for concern, we feel — and experts agree — that this is the right starting point, and we will continue to monitor and listen to feedback to make sure we’re in the right place.”

The alerts will roll out in the US, UK, Australia and Canada next week, and will become available in other regions later this year.

In the future, Instagram plans to trigger these notifications when a teen tries to engage the app’s AI in conversations about suicide or self-harm.

🕒 **Posted on**: 2026-02-26 (Unix timestamp 1772108292)