This was the opening talk for the launch of Medact’s Briefing on Palantir in the NHS, delivered along with Amnesty International, the Good Law Project, and Corporate Watch. Weeks after the briefing, members of the UK Parliament began demanding the contract be scrapped. Rather not read? You can watch the briefing here.

I worked for many years in artificial intelligence companies. My job was to explain how AI and surveillance systems work for different kinds of clients, from the commercial sector all the way to the Pentagon. Some of the tools I helped bring to market are being used today by companies like Anthropic to access federal contracts, and many of them are actively being used in war zones.

We’re seeing the consequences of their use at an unprecedented scale. It’s actually tragic to be in a conversation about Palantir’s use in the NHS today — a national healthcare system — after seeing the kind of war crimes that Palantir’s tools are involved in around the world, especially the massacre of almost 200 children in the Iranian city of Minab.

We need to understand how we got here. We also need to recognize that we don’t have to be engineers or technical experts to understand how these systems work. We need to take back a lot of the language that has been used to describe and market these tools. We need to find ways to grasp the invisible architectures of these platforms and scrutinize them, whether we’re workers, policymakers, or legal professionals.

There’s a lot of work to do. My quick introduction here will hopefully help you look at cloud surveillance platforms in a way that is simpler than the narratives pushed by marketing and hype-men. I want to look at AI software from a more philosophical and structural point of view, from a language point of view. I have often wondered why I became the loudest voice among those who formerly worked at Palantir, especially as an English major and not an engineer. I think it’s because, fundamentally, I was in charge of lying about these systems, of deploying language that I would later realize misrepresented the real-world consequences of the widespread adoption of AI tools.

I believe it’s up to people who are not engineers, especially people who are professionals implementing these systems, to challenge surveillance and AI. A friend who knows nothing about AI asked me the other day what the singularity will look like, what will happen when the machines take over. I honestly could not answer the question. But he actually answered it himself better than anyone I know. He said, “I don’t think we will know how AI will take over the world, or the ways in which it will harm us, because it’ll be so much more advanced than us that we won’t be able to understand it or perhaps even perceive it.”

Understanding, by educating each other and educating ourselves, is the most important thing we can do in order to challenge these systems. And this is my intention: to challenge and reclaim language for workers, for healthcare professionals, and for whoever else may be reading or watching this talk.

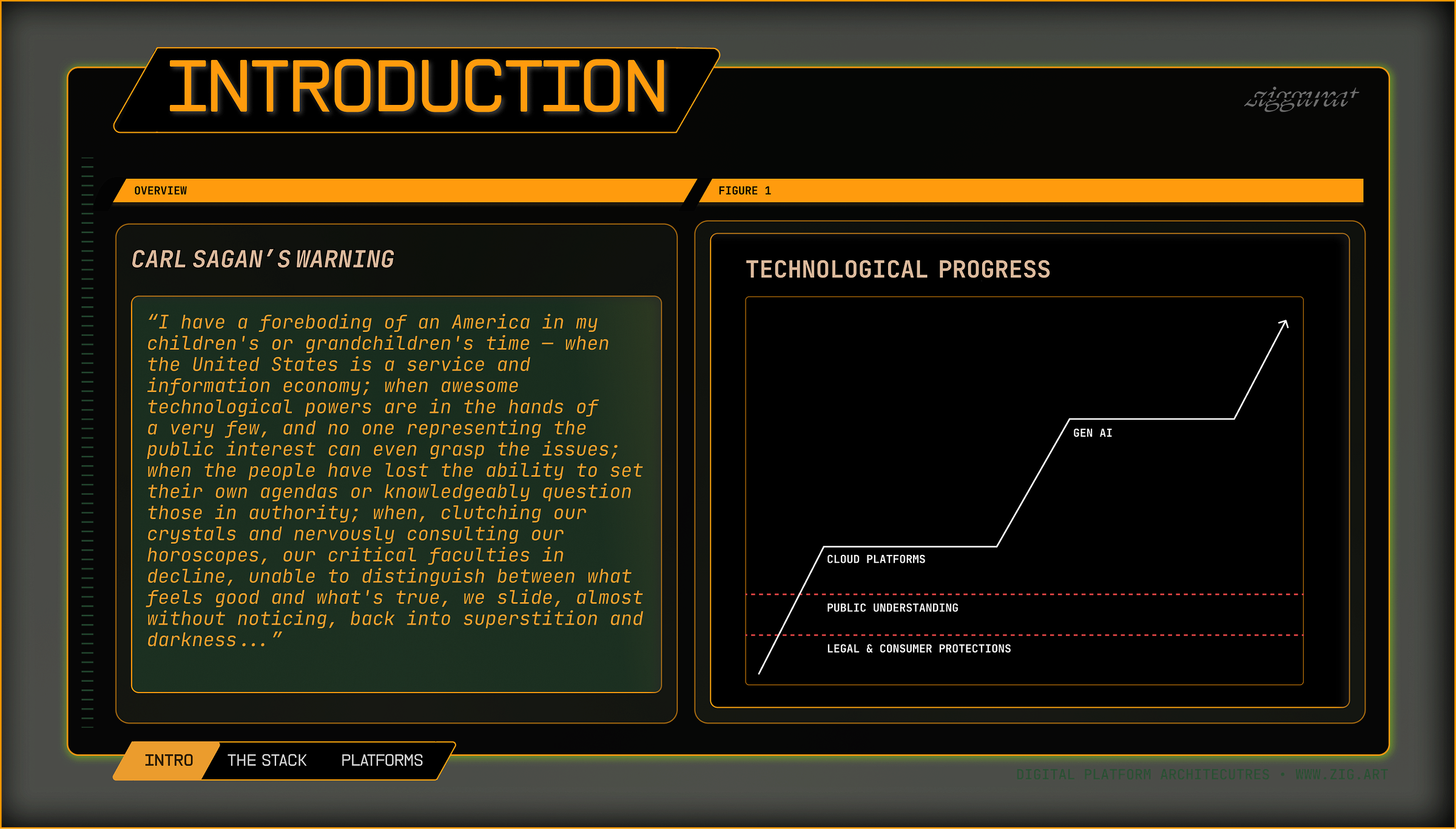

Let’s start with the idea that technological progress is moving extremely fast today. We’ve long been warned about this: in his farewell address, Eisenhower cautioned about the rise of a military-industrial complex taking over America. Carl Sagan, in his book The Demon-Haunted World, warned about a world in which the information economy takes over our institutions, and people are unable to challenge them or understand how they work. In this world, people enter a kind of darkness, a place of magic where they don’t understand how decisions are being made, or how the world is being shaped around them.

We’ve seen some major revolutions in the past 20 years, one of them being the proliferation of cloud platforms. These consumer or business-to-business (B2B) cloud platforms are taking over our information space and the way we communicate and do our work every day. More recently, generative AI (Gen AI) has added even more complexity to these tools. However, public understanding of how these systems work lags far behind their development, and so do the legal and consumer protections meant to safeguard the public.

Complicating matters is that everybody who talks about AI and these data architectures has a different way of talking about them, often depending on their intention. There’s technical jargon, military language, marketing language, and academic language. I lean on the academic. I think we’ve made the mistake of following the lead of the CEOs of these companies and their narratives too closely, especially by using their own language to challenge them. What is most important is to find shared language that everybody can understand in order to describe these systems and their consequences.

That’s why I’m going to do a bit of an exercise in reclaiming language. Palantir as a company has a habit of taking IP and words that aren’t theirs and using them for marketing — the main example being their name. I’m going to go into two of those words, “Palantir” and “ontology,” and see if we can reclaim them.

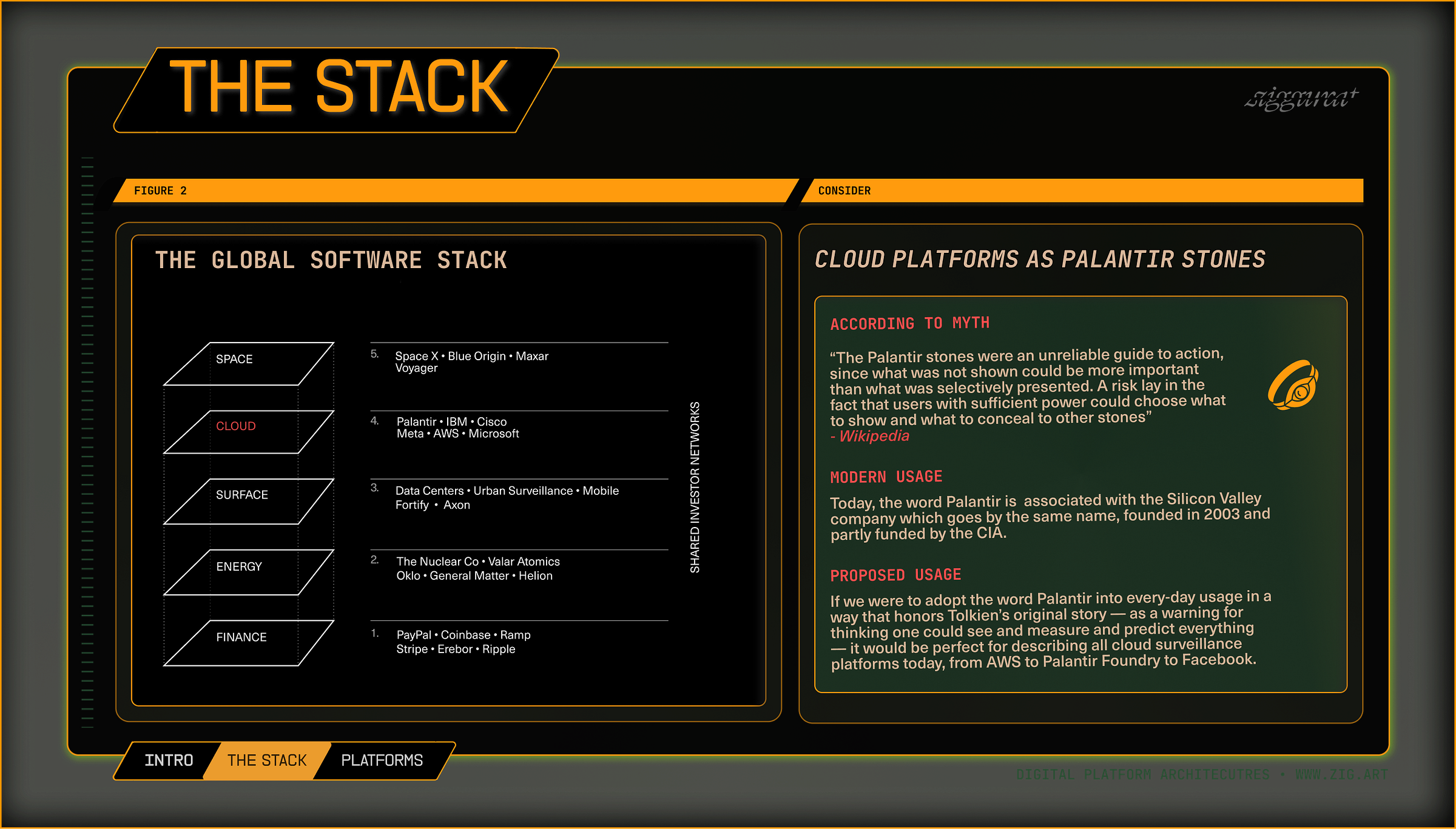

Let’s start with the word “Palantir.” Many of us probably know that it’s a loan word from J.R.R. Tolkien’s The Lord of the Rings, where it describes all-seeing stones in Middle-earth that allow users to see remote locations and possibly predict the future. The problem with these stones in Tolkien’s works is that they often lead to overconfidence in what they show. They mislead people who make decisions based on knowledge and not wisdom. It’s a very intentional naming (after all, Palantir’s tools provide that capability for remote viewing, predictive analytics, and decision-making), but the warning is already in the name.

Today, we associate Palantir with the company, but as a generic term, it would be well-suited to describe all sorts of companies that collect data and give users surveillance and predictive power. Many of these companies — or modern “Palantir stones” — adopt similar architectures, process data in similar ways, and offer this dangerous possibility of surveillance and prediction that might not be grounded in reality.

Across finance, energy, cloud infrastructure, and even outer space, private companies are pushing software into every part of society. This leads to what Francesca Bria calls the “privatization of sovereignty,” where we lose sovereignty by slowly delegating decisions and actions to private tech companies across all sectors of our public and private lives. Cloud platforms like Palantir form a specific layer in a wider global information system, or computational “stack.” Specifically, Palantir sits at the cloud layer, coordinating data from different surveillance sources and helping make decisions through databases, algorithms, and applications.

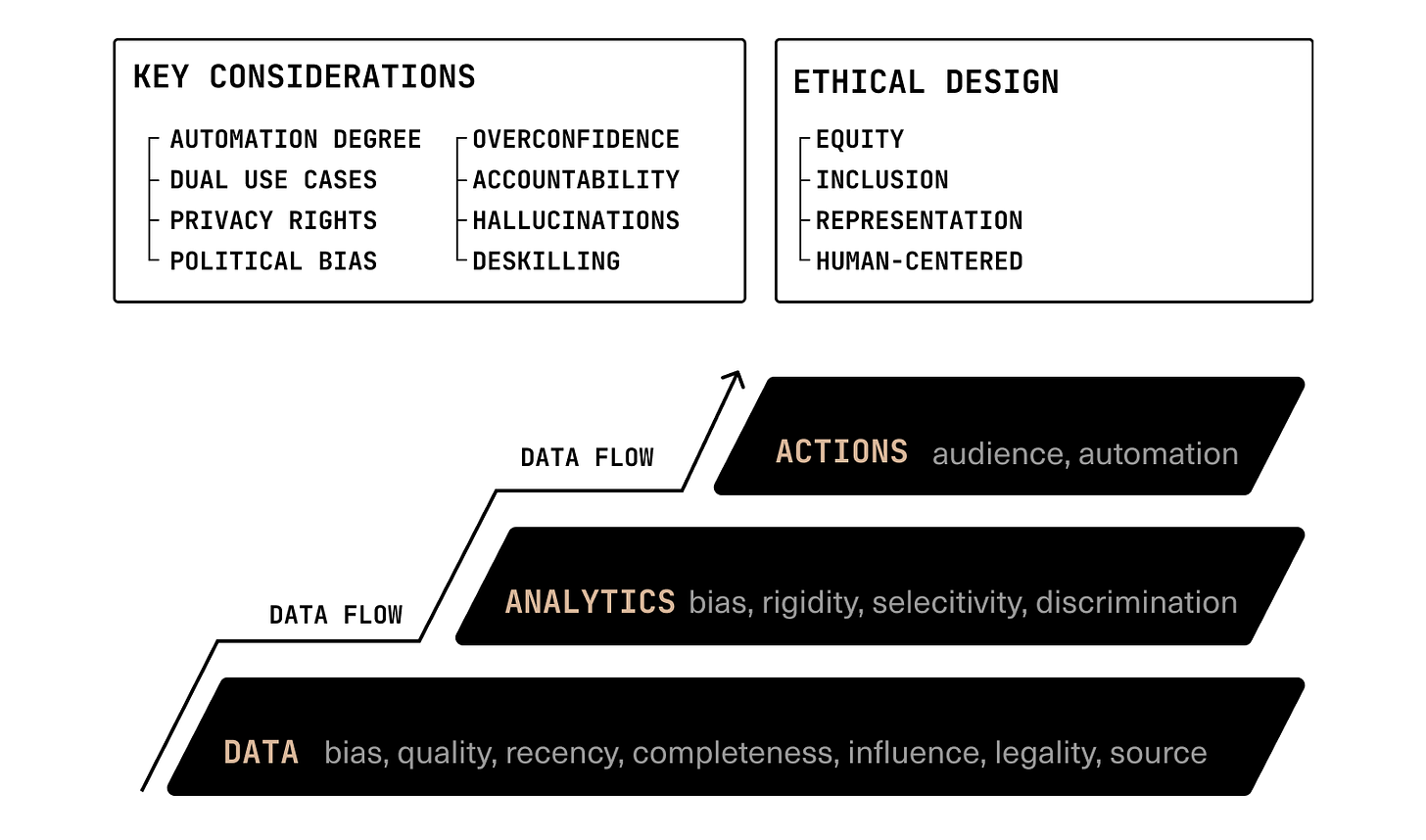

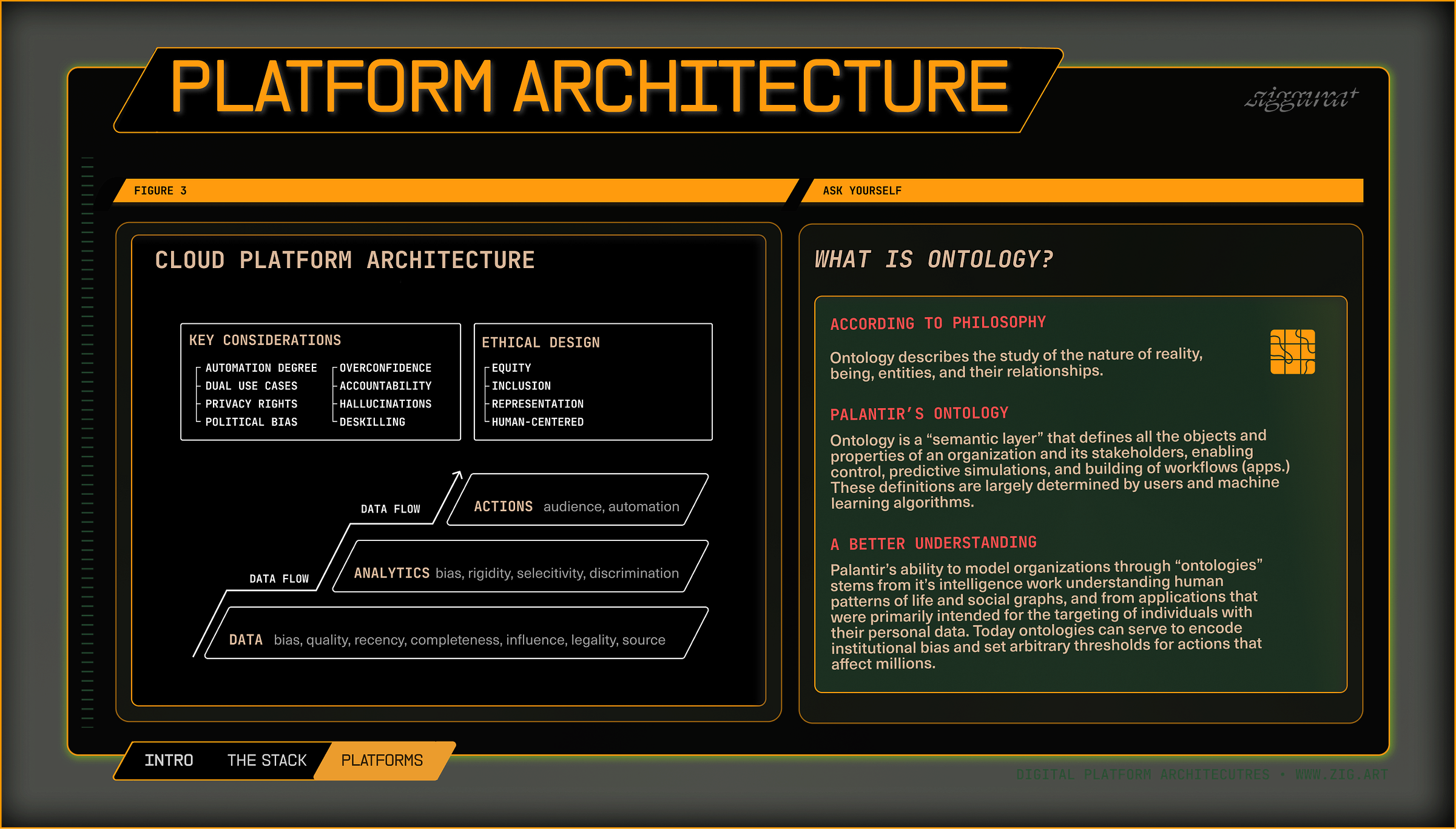

Let’s look at how cloud surveillance systems at this layer of the stack, like Palantir, are structured. Most cloud surveillance platforms (often sold as B2B SaaS) follow a similar input-output data model, one which starts with the data that goes into the system, and ends with the automations and decisions (also known as “solutions” or “use-cases”) that come out of that system.

Think of it as a pyramid, with the most important layer, the data coming into the system, at the bottom. At the data stage, we have to consider the bias, quality, recency, completeness, legality, and sourcing of the data that enters the platform. This is the most critical point of scrutiny, because it forms the foundation on which all decisions and automations are based.

The next layer is the analytics layer. This is where bias, rigidity, selectivity (cherry-picking), and discrimination can happen. This is where meaning is made from data, where analysis tools are used, and where thresholds for classification determine what counts as what within the “ontology,” or simulation, of an organization. It’s important to examine how tools at this stage are designed and how they influence decision-making, as ideology and tools can heavily influence data interpretation.

The final layer is the action and automation layer — where data and analytics turn into decisions and automated processes, and where human roles are delegated to machines. The key questions to ask here are: who is affected? Are outcomes equitable and fair to under-represented populations? And how much of the decision-making process was automated vs. handled by humans?
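To make the three layers concrete, here is a minimal sketch in Python. It is not how Palantir or any real platform is built. The class names, field names, the `0.5` threshold, and the sample records are all hypothetical, chosen only to show where the scrutiny questions above attach: provenance at the data layer, the threshold at the analytics layer, and the human-or-machine question at the action layer.

```python
from dataclasses import dataclass, field


@dataclass
class Record:
    """Data layer: one input row plus the provenance needed to scrutinize it."""
    subject_id: str
    value: float
    source: str          # where the data came from (hypothetical names below)
    collected_year: int  # how recent the data is


@dataclass
class Decision:
    """Action layer: what was decided, and whether a human was involved."""
    subject_id: str
    action: str
    automated: bool
    trail: list = field(default_factory=list)  # audit trail across all layers


def analyze(record: Record, threshold: float) -> str:
    """Analytics layer: a threshold turns a number into a category."""
    return "flagged" if record.value >= threshold else "clear"


def act(record: Record, threshold: float, human_review: bool) -> Decision:
    """Run one record through all three layers and keep the audit trail."""
    label = analyze(record, threshold)
    action = "refer" if label == "flagged" else "none"
    trail = [
        f"data: source={record.source}, year={record.collected_year}",
        f"analytics: value={record.value} vs threshold={threshold} -> {label}",
        f"action: {action} (automated={not human_review})",
    ]
    return Decision(record.subject_id, action, not human_review, trail)


# Illustrative inputs only; nothing here comes from a real system.
records = [
    Record("a", 0.72, "hypothetical_registry", 2019),
    Record("b", 0.41, "hypothetical_survey", 2024),
]
decisions = [act(r, threshold=0.5, human_review=False) for r in records]
for d in decisions:
    print(d.subject_id, d.action, "automated" if d.automated else "reviewed")
```

Even in a toy like this, the answer to "who is affected?" depends on choices that are invisible in the output unless the trail is kept: where the data came from, where the threshold was set, and whether anyone reviewed the result.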

Nearly all modern B2B cloud software works this way. Most software companies focus on a particular industry and establish their ground through proprietary data and solutions, which they then use to build a “moat” around their business. Palantir, by contrast, allows any organization to build these pipelines, from data to analytics to apps, in an agnostic fashion on any data it owns. It’s really important to scrutinize the tools that governments are building with these platforms, starting with the types of data involved, then moving to the design of systems, and eventually to how they influence decision-making and determine outcomes in real life.

When you consider these companies, whether it’s Palantir that’s being pushed at your organization or another surveillance platform that follows this same model of promising to integrate your data and offer analytics and actions, you have to realize that their main incentive is to make money. They aim for what they call vertical integration. They want to sell their tools across multiple departments and roles and automate as many functions as possible to make organizations dependent on them. They give away their software for free or at a very low cost, often exploiting emergencies or disasters, and then the costs scale exponentially with usage as an organization becomes dependent on their tools.

Ultimately, however, these tools lead to a loss of accountability. As these systems become more complex, it becomes harder to trace which decisions are automated, while overconfidence in outputs combined with gradual organizational deskilling ends up degrading results. With Gen AI added across all layers, accountability becomes even harder to establish. Gen AI can hallucinate and operates partly independently of organizational data, bringing in external biases that can’t be traced. So-called “agentic” systems that leverage multiple Gen AI models, often organized in highly convoluted setups, diffuse responsibility at an exponential scale.

The second Palantirism that I want to reclaim is the word “ontology.” I think it is important to understand this word because Palantir talks about it as if it’s their intellectual property, this “semantic layer” that helps create a simulation of an organization. But what’s important to remember is that ontology is a philosophical term and a data informatics term. It describes how people and institutions make sense of reality and the relationships between entities in reality. Palantir’s ontology supposedly sits at the analytic layer of a cloud platform and helps make sense of data in this way.

The problem today is that this “ontology” is exactly where political ideology is injected into governance systems, and that’s why we have to understand the history of these tools. The ability to model and simulate organizations comes originally from Palantir’s ability to detect life patterns of individuals and conduct investigations on social graphs of their relationships. By first investigating people for intelligence agencies and finding ways of weaponizing their data against them, Palantir was able to start simulating things, creating ontologies of the world that define what constitutes people and events (known as nodes) and their relationships (known as edges). It then moved on to building ontologies and simulations out of all sorts of data from all of its clients. Nonetheless, we have to remember that the roots of this organizational simulation technology lie in targeting processes that weaponize people’s personal data against them, and we have to recognize that these ontologies, through the categories they encode, can also allow governments to institutionalize bias against those same people.
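In data terms, an ontology of this kind is a typed graph: nodes for things like people and events, edges for the relationships between them. The Python sketch below is a generic illustration of that structure, not Palantir’s actual data model. Every name in it (`Node`, `Edge`, `Ontology`, the kinds and relations) is a hypothetical chosen for the example. The point it tries to show is that whoever writes the list of allowed kinds decides what the system can and cannot see.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Node:
    node_id: str
    kind: str  # e.g. "person" or "event"; the schema decides which kinds exist


@dataclass(frozen=True)
class Edge:
    source: str
    target: str
    relation: str


class Ontology:
    """A tiny typed graph: allowed kinds and relations are design decisions."""

    def __init__(self, allowed_kinds, allowed_relations):
        self.allowed_kinds = set(allowed_kinds)
        self.allowed_relations = set(allowed_relations)
        self.nodes = {}
        self.edges = []

    def add_node(self, node: Node) -> bool:
        # Anything outside the allowed kinds cannot be represented at all.
        if node.kind not in self.allowed_kinds:
            return False
        self.nodes[node.node_id] = node
        return True

    def add_edge(self, edge: Edge) -> bool:
        # Both endpoints must already exist and the relation must be allowed.
        known = edge.source in self.nodes and edge.target in self.nodes
        if not known or edge.relation not in self.allowed_relations:
            return False
        self.edges.append(edge)
        return True

    def neighbors(self, node_id: str):
        """Return the ids directly linked to node_id, in either direction."""
        out = set()
        for e in self.edges:
            if e.source == node_id:
                out.add(e.target)
            elif e.target == node_id:
                out.add(e.source)
        return sorted(out)


onto = Ontology(allowed_kinds={"person", "event"},
                allowed_relations={"attended", "knows"})
onto.add_node(Node("p1", "person"))
onto.add_node(Node("p2", "person"))
onto.add_node(Node("e1", "event"))
onto.add_edge(Edge("p1", "e1", "attended"))
onto.add_edge(Edge("p1", "p2", "knows"))
rejected = onto.add_node(Node("x1", "household"))  # kind not in the schema
print(onto.neighbors("p1"), rejected)
```

A single `neighbors` call is the simplest form of the social-graph investigation described above, and the rejected `household` node shows how a category that the designers left out simply does not exist for the system.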

In the United States today, Palantir is actively being used by agencies to police and enact executive orders related to gender ideology and trans healthcare, and to end programs and research related to diversity, equity, and inclusion. Using Palantir’s tools, these keywords have become something to be purged from the United States government and its grants. Palantir’s platforms have helped lead to the mass firing of employees who were working on research connected to these areas of study, and to the cancellation of millions, if not billions, of dollars in related research.

But what really startles me is that these ideas — of equity, of inclusion, of representation — are all very important for the design of responsible governance platforms that account for people and for their real needs. Instead, Palantir has chosen to help the Trump administration dismantle these ideals themselves, which are classic Western democratic concepts. To me that really shows how Palantir’s ontology can be reconfigured to harm people and to reflect the political goals of authoritarian governments, an indication that we should come to see these “ontologies” as weapons to enforce world-views upon people without democratic consent.

Another example of how data systems can be used to discriminate against people became evident when Trump declared the existence of only two official genders in the United States. When a third gender category ceased to exist in the information platforms used at the border, some people had issues returning to their home country because their gender category was no longer recognized in America’s border “ontology.”

These platform ontologies, these definitions of what is what, end up determining who is recognized, how resources are allocated, and how threats are detected. They are made not only through AI classification algorithms, but through people: the designers of these systems. Together they allow bias to be established through algorithmic thresholds that classify people as targets, or risks, or subject to any action that could affect their lives. The most dangerous aspects of these tools are (1) that they allow institutional bias to be encoded in software architecture and embodied in the real world, and (2) their dual-use nature: civilian commercial applications that handle people’s personal data can almost always be weaponized in military or law enforcement contexts.
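A threshold is a small thing to look at, which is part of why it escapes scrutiny. The sketch below uses made-up scores and a hypothetical `flag` function to show that the same data produces a different set of "risks" depending on a single number chosen by a designer. It is an illustration of the general mechanism, not of any specific product.

```python
def flag(scores: dict, threshold: float) -> list:
    """Return the ids whose score meets or exceeds the threshold, sorted."""
    return sorted(pid for pid, s in scores.items() if s >= threshold)


# Hypothetical risk scores; nothing here comes from a real system.
scores = {"a": 0.35, "b": 0.48, "c": 0.52, "d": 0.81}

strict = flag(scores, threshold=0.8)   # only the highest score is flagged
loose = flag(scores, threshold=0.45)   # three of four people are flagged
print(strict)
print(loose)
```

Nothing about the people changed between the two calls. Only the designer’s choice of cutoff did, and that choice is exactly the kind of decision that rarely appears in public documentation.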

“Magic produces, or pretends to produce, an alteration in the Primary World. It does not matter by whom it is said to be practiced, fay or mortal, it remains distinct from the other two; it is not art but a technique; its desire is power in this world, domination of things and wills…

The more potent and specially elvish craft I will, for lack of a less debatable word, call Enchantment. Enchantment produces a Secondary World into which both designer and spectator can enter, to the satisfaction of their senses while they are inside; but in its purity it is artistic in desire and purpose.”

J.R.R. Tolkien, On Fairy-Stories

JRR Tolkien was a prolific student of the humanities, whose mind was able to create a simulated fantasy based on the richness of human cultures and languages in Middle-earth. Today, however, the company that bears the name of his creation, Palantir, continues to wage a public war on the humanities, liberal arts universities, and students. Meanwhile, it is pushing for the very type of global conflict that inspired Tolkien to write about the horrors of war, and the battle between good and evil, in the first place.

The company has also been very forthright recently about what kind of fantasy it wants to enact in the world, and what “ontology” it wishes to push to evaluate cultures, nations, and people and deliver them to justice: one based on white supremacy, colonialism, and corporate control. It’s up to us whether we allow this government to manifest this vision, as I warned over a year ago, or whether we relegate it, by market and political means, to the status of a fairy-tale.

In the realm of culture, the betrayal of the legacy of Tolkien can only be corrected by reclaiming language, something we can accomplish by heeding the original warning of the palantir stones: believing one can predict the future or truly understand reality from a single pane of glass or technology is a flawed and doomed endeavor, no matter the platform.

Therefore, the word “palantir” should not denote or be owned by a specific company, but serve to describe any and all platforms that allow Big Tech and governments to collect, analyze, and automate data to exploit people, discriminate against them, and predict their behavior. These platforms, which are convincing a small group of people that they can see the future and prescribe everything in our society, can all be understood and challenged better if seen as leading us down the same dark path of the mythical palantíri.

Above all, J.R.R. Tolkien’s works remind us that we do not need to accept a worldview, or “ontology,” from above, especially from a corporation or a magical stone. It is the evil wizards of Middle-earth who aim to push their will and domination through magic, while the original elves once ruled through enchantment, guarding the Palantir stones with fellowship, strict rituals, and careful traditions to control their power.

When we are subjected to darkness, magic, and the unknown “demon-haunted” world, meaning and purpose come from struggle, from first-hand experience, from community, and from developing a shared understanding of our shared reality, as we have done in this exercise. By challenging pre-existing notions, narratives, and fairy-tales pushed by Big Tech, we can replace them with our own visions.

As Tolkien writes in his essay On Fairy-Stories, “Fantasy can, of course, be carried to excess. It can be ill done. It can be put to evil uses. It may even delude the minds out of which it came. Men have made false gods out of other materials: their notions, their banners, their monies; even their sciences and their social and economic theories have demanded human sacrifice…Fantasy remains a human right.”

Note: My content is currently being filtered by nearly every social media platform except Instagram, so your sharing counts and helps get the word out! Thank you for reading.

* This pamphlet letter, a protected creative work, is addressed to the American people out of civic duty and concern and protected under the First Amendment.

🕒 **Posted on**: 1776920574
