✨ Explore this trending post from TechCrunch 📖

📂 **Category**: AI, AI models, Multiverse Computing, CompactifAI

✅ **What You’ll Learn**:

With the default rate at private companies rising to 9.2% – the highest rate in years – venture capital firm Lux Capital recently advised AI-driven companies to get confirmation of their computing power commitments in writing. As financial instability spreads across the AI supply chain, Lux warns that a handshake agreement is not enough.

But there is another option: stop relying on external computing infrastructure altogether. Smaller AI models that run directly on a user's own machine, with no data center, cloud provider, or counterparty risk involved, are now good enough to be worth considering. Multiverse Computing is raising its hand.

The Spanish startup has kept a lower profile than some of its peers, but that is changing as demand for efficient AI grows. Having compressed models from major AI labs including OpenAI, Meta, DeepSeek, and Mistral AI, it is now making them more widely available through two launches: an app that showcases what its compressed models can do, and an API gateway that lets developers access and build on them.

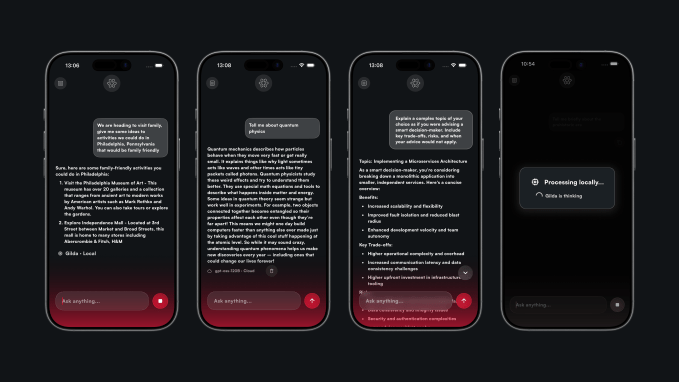

CompactifAI, which shares its name with Multiverse's quantum-inspired compression technology, is an AI-powered chat app similar to ChatGPT or Mistral's Le Chat. Ask a question, and the model answers. The difference is that the app comes with Gilda built in, a model small enough to run locally and offline, according to the company.

For end users, this is AI at the edge: data never leaves their device, and no connectivity is required. But there's a caveat: the device must have enough RAM and storage. If it doesn't, and many older iPhones won't, the app falls back to cloud-based models via an application programming interface (API). The routing between on-device and cloud processing is handled automatically by a system Multiverse has dubbed Ash Nazg, a name Tolkien fans will recognize from the One Ring's inscription in “The Lord of the Rings.” But when the app falls back to the cloud, it loses its key privacy advantage.
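Multiverse hasn't published how Ash Nazg decides where a request runs. As a rough illustration only, a local-first router could compare a model's memory and storage needs against what the device reports, and fall back to the cloud only when the model doesn't fit. The `DeviceInfo` and `LocalModel` types, the `route` function, and all sizes below are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DeviceInfo:
    """Snapshot of the resources a device reports (hypothetical fields)."""
    free_ram_gb: float
    free_storage_gb: float
    online: bool


@dataclass(frozen=True)
class LocalModel:
    """An on-device model and what it needs to run (hypothetical sizes)."""
    name: str
    ram_needed_gb: float
    storage_needed_gb: float


def route(device: DeviceInfo, model: LocalModel) -> str:
    """Prefer on-device inference; fall back to a cloud API only when needed.

    Returns "local" or "cloud". Raises RuntimeError if the model does not
    fit on the device and there is no network to fall back to.
    """
    fits = (
        device.free_ram_gb >= model.ram_needed_gb
        and device.free_storage_gb >= model.storage_needed_gb
    )
    if fits:
        return "local"  # prompt and response never leave the device
    if device.online:
        return "cloud"  # works, but the privacy advantage is lost
    raise RuntimeError(f"{model.name} does not fit on this device and it is offline")


if __name__ == "__main__":
    # Placeholder requirements, not Gilda's real footprint.
    small_model = LocalModel("small-model", ram_needed_gb=3.0, storage_needed_gb=2.0)
    print(route(DeviceInfo(free_ram_gb=6.0, free_storage_gb=32.0, online=False), small_model))  # local
    print(route(DeviceInfo(free_ram_gb=2.0, free_storage_gb=32.0, online=True), small_model))   # cloud
```

The local path is checked first even when the device is online, which mirrors the privacy argument in the article: the cloud is a fallback, not the default.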

These limitations mean that CompactifAI isn't quite ready for mainstream consumer adoption, although that was probably never the goal. According to data from Sensor Tower, the app had fewer than 5,000 downloads in the past month.

The real target is businesses. Today, Multiverse is launching a self-service API portal that gives developers and organizations direct access to its compressed models, without having to go through AWS Marketplace.


“It gives developers direct access to compressed models with the transparency and control needed to run them in production,” Multiverse CEO Enrique Lizaso said in a statement.

Real-time usage monitoring is one of the portal's main features, and that is no coincidence. Beyond the potential advantages of deploying at the edge, lower compute costs are one of the main reasons organizations are considering smaller models as an alternative to large language models (LLMs).
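The article doesn't describe the portal's monitoring interface. As a hypothetical sketch of why real-time usage tracking matters for cost comparisons, the `UsageMeter` class below keeps running token totals per model and converts them into spend. The class, the model names, and the per-million-token prices are made-up placeholders, not Multiverse's API or pricing.

```python
from dataclasses import dataclass, field


@dataclass
class UsageMeter:
    """Running per-model token and cost totals.

    Simplification: one blended price per token; real APIs often price
    input and output tokens differently.
    """
    price_per_million: dict[str, float]  # USD per 1M tokens (placeholder values)
    tokens: dict[str, int] = field(default_factory=dict)

    def record(self, model: str, prompt_tokens: int, completion_tokens: int) -> None:
        """Add one request's token counts to the running total."""
        if model not in self.price_per_million:
            raise KeyError(f"no price configured for {model!r}")
        self.tokens[model] = self.tokens.get(model, 0) + prompt_tokens + completion_tokens

    def cost(self, model: str) -> float:
        """Spend so far on one model, in USD."""
        return self.tokens.get(model, 0) / 1_000_000 * self.price_per_million[model]

    def total_cost(self) -> float:
        """Spend so far across all models, in USD."""
        return sum(self.cost(m) for m in self.tokens)


if __name__ == "__main__":
    meter = UsageMeter({"compressed-model": 0.10, "original-model": 0.40})
    # Same workload sent to both models.
    meter.record("compressed-model", prompt_tokens=300_000, completion_tokens=200_000)
    meter.record("original-model", prompt_tokens=300_000, completion_tokens=200_000)
    print(f"{meter.cost('compressed-model'):.2f}")  # 0.05
    print(f"{meter.cost('original-model'):.2f}")    # 0.20
```

With identical token counts, the spend gap comes entirely from the per-token price, which is the lever a cheaper, smaller model pulls.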

It also helps that small models are less limited than they used to be. Earlier this week, Mistral updated its Small model family with Mistral Small 4, which it says is optimized for general chat, coding, agentic tasks, and reasoning all at once. The French company also released Forge, a system that lets organizations build custom models, including smaller ones, and choose the trade-offs their use cases can best tolerate.

Multiverse's own recent results also suggest that the gap with LLMs is narrowing. Its latest compressed model, HyperNova 60B 2602, is built on gpt-oss-120b, an OpenAI model whose weights are openly available. The company claims it now delivers faster responses at a lower cost than the model it was derived from, which matters especially for agentic coding workflows, where AI completes complex, multi-step programming tasks autonomously.
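A back-of-the-envelope estimate shows why shrinking a model matters. Treating the "120b" and "60B" in the model names as rough parameter counts (an assumption), and picking 16-bit and 4-bit weights purely for illustration (not necessarily the formats either model actually ships in), weight memory is parameters × bits per parameter ÷ 8 bytes. The helper name `weight_memory_gb` is hypothetical.

```python
def weight_memory_gb(params_billion: float, bits_per_param: float) -> float:
    """Approximate memory needed for the weights alone, in GB (1 GB = 1e9 bytes).

    params * bits / 8 gives bytes; with params in billions this is already GB.
    Activations, KV cache and runtime overhead come on top of this.
    """
    return params_billion * bits_per_param / 8


if __name__ == "__main__":
    # Sizes read off the model names; precisions chosen only for illustration.
    for label, params in [("~120B model", 120), ("~60B model", 60)]:
        for bits in (16, 4):
            print(f"{label} at {bits}-bit: {weight_memory_gb(params, bits):.0f} GB")
```

Under these assumptions, halving the parameter count halves weight memory at any fixed precision (240 GB to 120 GB at 16-bit, 60 GB to 30 GB at 4-bit), and both figures remain well beyond typical phone memory, which is why on-device use calls for much smaller models.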

Making models small enough to run on mobile devices while keeping them useful is a huge challenge. Apple Intelligence has sidestepped the problem by pairing an on-device model with a cloud model. Multiverse's CompactifAI app can also route requests to gpt-oss-120b via an API, but its main purpose is to show that homegrown models like Gilda and its future successors offer benefits beyond cost savings.

For workers in sensitive fields, a model that runs on-premises without connecting to the cloud offers greater privacy and flexibility. But the greatest value lies in the commercial use cases this could open up, such as integrating AI into drones, satellites, and other settings where connectivity can't be taken for granted.

The company already serves more than 100 clients worldwide, including the Bank of Canada, Bosch, and Iberdrola, but expanding its client base could help it unlock more financing. After raising a $215 million Series B last year, it is now reportedly seeking a new €500 million funding round at a valuation of more than €1.5 billion.


🕒 **Posted on**: March 19, 2026
