✨ Explore this must-read post from TechCrunch 📖

📂 **Category**: AI,Apps,Media & Entertainment,ai deepfakes,Creators,deepfake,Google,YouTube

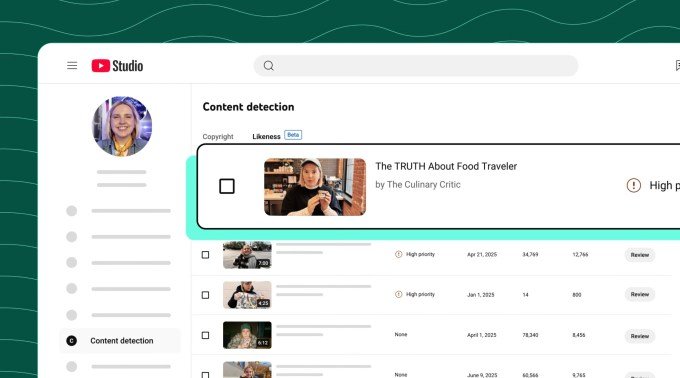

YouTube announced Tuesday that it is expanding its likeness detection technology, which identifies AI-generated deepfakes, to a pilot group of government officials, political candidates, and journalists. Members of the pilot group will get access to a tool that detects unauthorized AI-generated content and lets them request its removal if they believe it violates YouTube policy.

The technology itself was rolled out last year to about 4 million YouTube creators in the YouTube Partner Program, after earlier tests.

Similar to YouTube’s existing Content ID system, which detects copyrighted material in videos uploaded by users, the likeness detection feature looks for simulated faces made using AI tools. These tools are sometimes used to spread misinformation and manipulate people’s perceptions of reality by having deepfake personas of prominent figures, such as politicians or other government officials, say and do things in AI videos that they would not do in real life.

Through the new pilot program, YouTube aims to balance users’ freedom of expression with the risks associated with artificial intelligence technology that can generate a convincing image of a public figure.

“This expansion is really about the integrity of the public conversation,” Leslie Miller, YouTube’s vice president of government affairs and public policy, said in a pre-launch press conference on Tuesday. She added: “We know that the risks of AI impersonation are particularly high for those working in the civilian field. But as we introduce this new shield, we are also being careful about how we use it.”

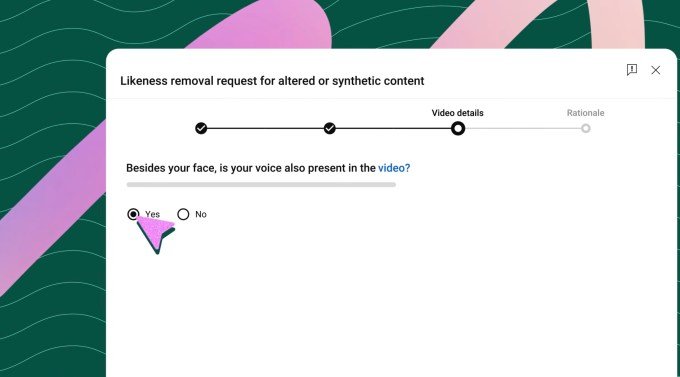

Miller explained that not all detected matches will be removed upon request. Instead, YouTube will evaluate each request under its current privacy policy guidelines to determine whether the content is parody or political criticism, which are protected forms of free expression.

The company noted that it is advocating for these protections at the federal level as well, through its support of the NO FAKES Act in D.C., which would regulate the use of artificial intelligence to create unauthorized recreations of an individual’s voice and visual likeness.

To use the new tool, eligible beta testers must first prove their identity by uploading a selfie and government ID. They can then create a profile, view the matches that appear, and optionally request their removal. YouTube says it plans to eventually give people the ability to block uploads of violating content before it goes live, or perhaps allow them to monetize those videos, similar to how its Content ID system works.

The company did not confirm which politicians or officials would be among those conducting its initial testing, but said the goal was to make the technology widely available over time.

AI-generated videos will be labeled as such, but the placement of these labels is inconsistent. For some, the label appears in the video description, while videos that focus on more “sensitive topics” will carry the label at the start of the video. This is the same approach YouTube takes with all AI-generated content.

“There’s a lot of content that’s been produced with AI, but that distinction isn’t actually intrinsic to the content itself,” explained Amjad Hanif, YouTube’s vice president of creator products, regarding label placement. “It could be a cartoon created using artificial intelligence. So I think there’s a judgment call as to whether that’s a category that deserves a very clear disclaimer,” he added.

YouTube does not currently share how many AI deepfakes have been taken down through the detection technology already available to creators, but noted that the amount of content removed so far has been “very small.”

“I think for many [creators], it’s just been an awareness of what’s being generated, but the volume of takedown requests is actually really low because most of them turn out to be fairly benign or additive to their overall business,” Hanif said.

This may not be the case with deepfakes of government officials, politicians, or journalists.

Over time, YouTube intends to bring its deepfake detection technology to more areas, including recognizable spoken voices and other intellectual property such as popular characters.

🕒 **Posted on**: 1773228251